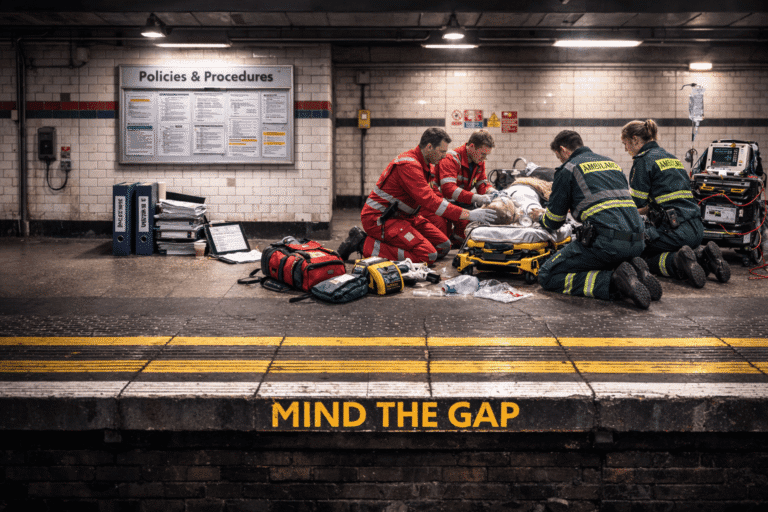

When Adaptation Becomes the Safety Strategy

Designing Clinical Systems for Pressure, Not Perfection

Most clinical systems are designed around how work is supposed to happen.

Processes are mapped.

Escalation pathways are defined.

Equipment is assumed to be used as intended.

On paper, it works.

In reality, clinical work rarely unfolds in tidy lines.

Healthcare happens amid interruptions, time pressure, missing information, variable staffing and imperfect environments. Whether in prehospital care, theatre, ICU, maternity, community services or retrieval, ideal sequencing gives way to what is actually possible.

So clinicians adapt.

They change task order.

They reposition equipment.

They anticipate friction before it appears.

They compensate for gaps that were never written into the protocol.

That adaptability is a strength.

But there’s a line we don’t talk about enough.

Resilience is a strength when it’s a buffer for the unexpected.

It becomes a hidden risk when it’s the only thing keeping routine care afloat day after day.

If your system only works because experienced people quietly compensate for its weaknesses, that isn’t robustness.

It’s fragility held together by effort.

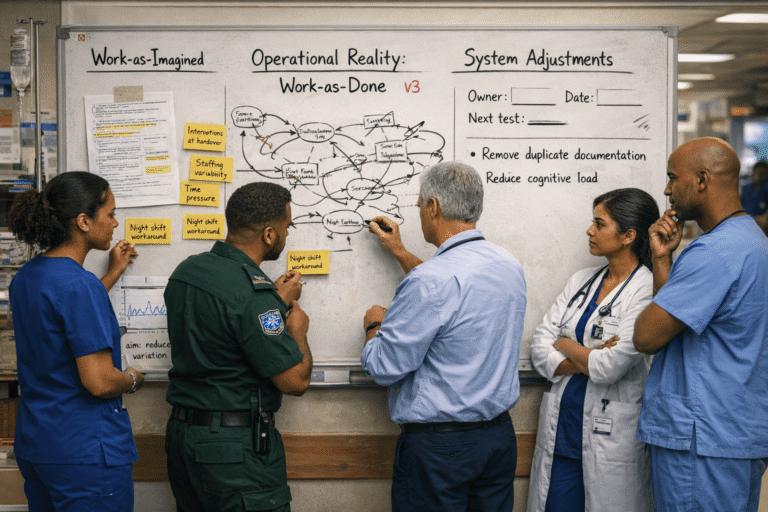

There will always be a difference between policy and practice. The issue isn’t that a gap exists. It’s whether it’s widening unnoticed.

Work-as-imagined lives in policies and flowcharts.

Work-as-done lives in real rooms with real constraints.

We’ve spent years chasing what goes wrong. That still matters, but on its own it misses the bigger story: what usually goes right under pressure.

A more useful question is this:

How does care usually go right despite pressure?

When you watch everyday work closely, you see small adjustments everywhere. Clinicians reorder tasks. They double-check verbally when the system doesn’t prompt them. They anticipate problems before they fully form.

That’s not rule-breaking. It’s how complex work survives.

But if we only measure incidents and compliance, we miss where those adjustments are compensating for design gaps.

That’s where risk starts to build — quietly, gradually, and often unnoticed.

Every workaround has a price.

It uses attention.

It uses memory.

It uses mental bandwidth.

Occasionally, that’s fine.

Repeated daily, it becomes structural.

Over time, teams stop noticing the adaptations. They become normal. New staff learn them through corridor conversations rather than through design.

When a workaround keeps recurring, that isn’t deviance.

It’s feedback.

The problem isn’t that people adapt. The problem is when safe performance depends on unwritten local knowledge.

Experienced staff often carry the invisible glue that holds things together. New or rotating clinicians pay a higher cognitive cost while they learn the unwritten rules.

A system that depends on tribal knowledge isn’t stable.

It’s just familiar.

Capacity is finite. When routine work consumes all the margin, there’s nothing left for the unexpected.

That’s when failure feels sudden — even though the warning signs were always there.

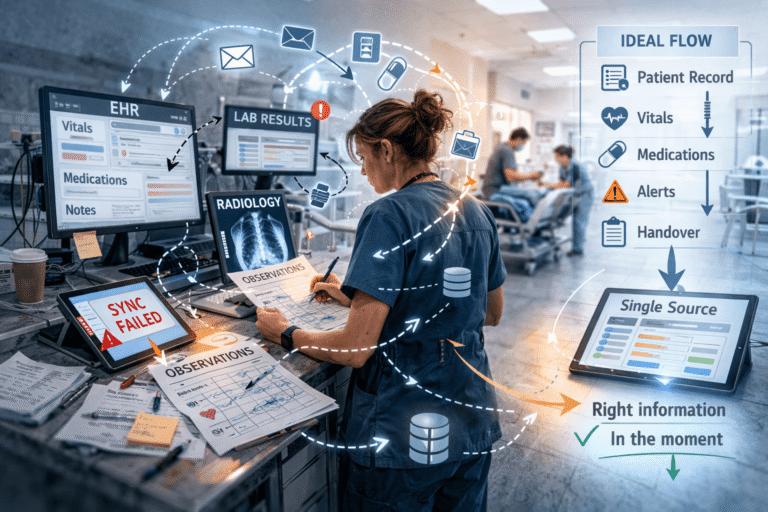

Digital tools were meant to make care clearer and more consistent.

Sometimes they do.

But often they are layered on top of existing workflows rather than designed around them.

Clinicians move between screens.

Information is entered more than once.

Documentation is finished later rather than captured in the moment.

Think of a busy ED shift: observations entered once on paper because the tablet won’t sync in the bay, then entered again later into the system. That duplication doesn’t disappear. It accumulates in someone’s head.

It’s not just about whether the interface works. It’s about whether the right information is easy to find and use under pressure.

If clinicians have to mentally stitch information together across multiple systems, that effort doesn’t disappear.

It sits in their heads.

Every extra step between what happens and what gets recorded creates room for small discrepancies that make error more likely over time.

Technology doesn’t remove complexity. It relocates it.

The question isn’t whether the system functions.

It’s whether it fits how people actually think and act under pressure.

This isn’t about lowering standards.

It’s about aligning them with reality.

Take a routine clinical handover.

On paper: structured format, uninterrupted flow, complete information transfer.

In practice: interruptions, late arrivals, parallel documentation, missing data.

That gap is not a training problem. It’s a design problem.

Instead of asking, “Why didn’t they follow the structure?” ask:

“What makes it hard to follow consistently?”

Redesign might mean:

- Protect the first two minutes from interruption
- Align electronic documentation with the spoken structure
- Remove duplicate data entry
- Make key risks visually obvious

None of that reduces expectations.

It removes friction.

That’s system design.

When adaptability becomes the main safety mechanism, organisations are outsourcing resilience to clinicians.

Healthcare doesn’t fail because professionals stop caring.

It fails when systems quietly demand more than they were built to carry.

This isn’t about perfection.

It’s about responsibility — deciding whether pressure is absorbed by design or by people.

If high performance under pressure is the expectation, then designing for pressure is not optional.

Adaptability should be spare capacity — not the primary control mechanism.

System design and equipment design are not separate conversations.

Pressure shows up in workflows — but it also shows up in how information is structured, how controls are grouped, and how decisions are guided in the moment.

This piece explores how visual hierarchy, cognitive load and human-centred design shape performance under pressure — and how small design choices can either reduce friction or quietly amplify it.

Financial Disclosures

Unless otherwise stated at the top of the post, related parties have no relevant financial disclosures or conflicts of interest.